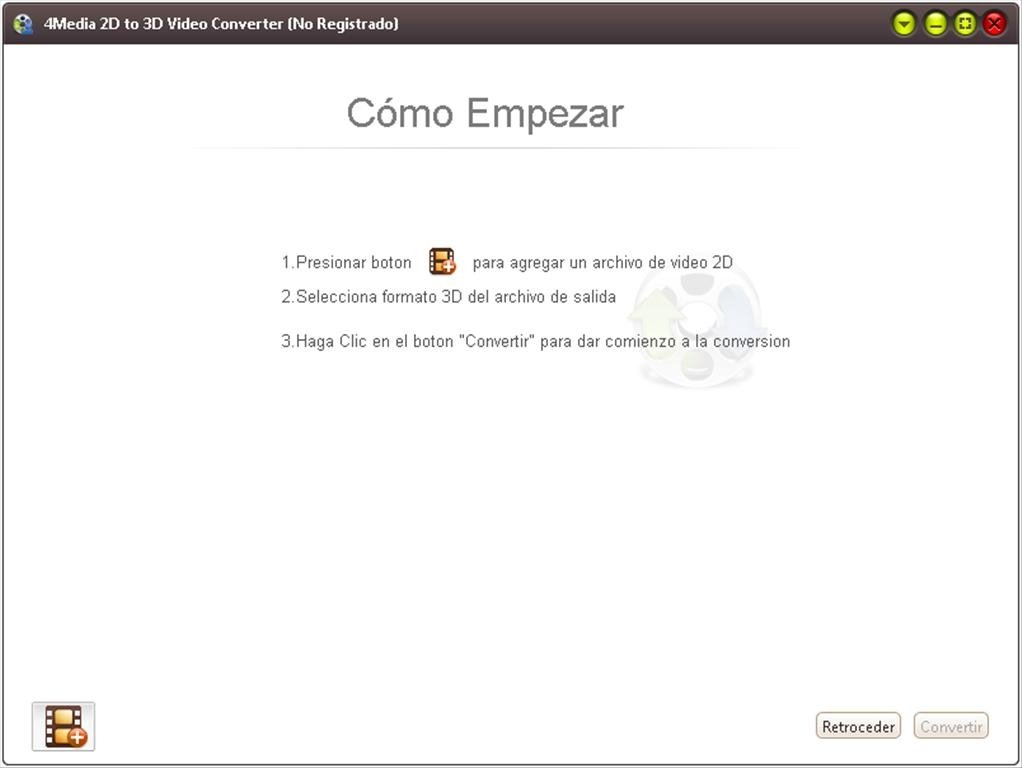

This is a short tutorial on how to run and train PIFu to convert a 2D photo into a 3D model with color. PIFu was introduced by Shunsuke Saito, Zeng Huang, Ryota Natsume, Shigeo Morishima, Angjoo Kanazawa, and Hao Li in "PIFu: Pixel-aligned implicit function for high-resolution clothed human digitization". In theory, PIFu can be trained to create 3D models of any type of object, not just humans, but you will need to train it with your own data. If you are feeling adventurous, you can create your own dataset and train PIFu using the Dockerfile that I created for you.

The easiest way to try the demo is to use Google Colab. Unfortunately, the Google Colab notebook in the PIFu source code doesn't check which version of PyTorch is installed, so it currently doesn't work. The available pre-trained model was trained with PyTorch 1.4, so inference also needs PyTorch 1.4. I created a copy of the notebook in which I downgrade PyTorch to 1.4. You can ignore the code that creates a video file: just run the notebook and open the mesh with MeshLab.

Step 1: Create a photo of the person you want to scan in 3D.

Step 2: Create a mask for the photo. Make sure that both images are 512×512 pixels.

Step 4: Upload your images, together with the mask, under /content/PIFu/sample_images.

Step 5: Execute the notebook and download the generated 3D model from /content/PIFu/results.

You will need to open the obj file in a mesh viewer that supports per-vertex color. I suggest downloading MeshLab for quick viewing. Here is my result.

Training of Geometry and Color

I was able to run the data generation steps and train PIFu on a Windows 10 PC, using WSL with Ubuntu 20 and the NVIDIA Container Toolkit to give Docker access to my RTX 3070 GPU. Installation instructions are available in the NVIDIA Container Toolkit documentation.

If you attempt to follow the manual steps as outlined on the PIFu website, beware. Pyembree is a pain to install on Windows, and if trimesh is installed without Pyembree, the data generation will take forever, because the ray tracing calculations over thousands of triangles use a slow fallback. Trimesh won't even warn you that it is not using Pyembree. The only way to find out is to try `import pyembree` and then inspect the ray tracer class that trimesh uses: check for `ray_pyembree` instead of `ray_triangle`. If you don't see `ray_pyembree` in the class package name, you will be using the fallback calculation for ray tracing.

As explained on the PIFu GitHub page, there are 3 stages for the training: data generation, training of the geometry, and training of the color.

PREREQUISITES (Windows 10): WSL with Ubuntu 20, Docker, and the NVIDIA Container Toolkit.

The steps below assume that all the prerequisites have been set up. The Dockerfile I created already executes the steps for downloading the sample mesh and generating the data, so you should be able to start the training as soon as you manage to build the Docker container.

Step 1: Download the Dockerfile with `git clone`.

The Dockerfile includes these instructions:

`FROM mikedh/trimesh:latest`

`RUN apt-get update && apt-get install -y zip`

`RUN apt-get install -y gcc libopenexr-dev zlib1g-dev`

`RUN mkdir rp_dennis_posed_004_OBJ && unzip rp_dennis_posed_004_OBJ.zip -d rp_dennis_posed_004_OBJ`

`RUN pip3 install opencv-python pyexr pyopengl`

`RUN export MESA_GL_VERSION_OVERRIDE=3.3 && python -m apps.render_data -i rp_dennis_posed_004_OBJ -o training -e`

`RUN rm /etc/apt//nvidia-ml.list && rm /etc/apt//cuda.list`

`RUN DEBIAN_FRONTEND=noninteractive TZ=Etc/UTC apt-get -y install tzdata`

`COPY --from=0 /home/user/PIFu /home/user/PIFu`

`RUN pip install tqdm scikit-image pyembree trimesh`

Step 2: Build the Docker container for training:

`cd 5fcfe5f67d290b817a9c77392b170fa6/`

Step 3: Start the Docker container:

`sudo docker run --gpus all -it pifu/training bash`

Step 4: Run the training for geometry:

`cd /home/user/PIFu`

`python3 -m ain_shape --dataroot training/ --random_flip --random_scale --random_trans`

Step 5: Run the training for color:

`python3 -m ain_color --dataroot training/ --num_sample_inout 0 --num_sample_color 5000 --sigma 0.1 --random_flip --random_scale --random_trans`
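The Pyembree check described in this post can be scripted so that you find out before starting a long data generation run. The following is only a sketch: it assumes that trimesh exposes the ray tracer it selected as `mesh.ray`, and that the module name of that object contains `ray_pyembree` or `ray_triangle`, as the post describes. The helper names `classify_ray_backend` and `detect_ray_backend` are made up for this example and are not part of trimesh or PIFu.

```python
import importlib.util


def classify_ray_backend(module_name: str) -> str:
    """Map the module name of a ray intersector class to 'embree' or 'fallback'."""
    return "embree" if "ray_pyembree" in module_name else "fallback"


def detect_ray_backend():
    """Return 'embree', 'fallback', or None when trimesh is not installed."""
    if importlib.util.find_spec("trimesh") is None:
        return None
    import trimesh

    # Any small mesh is enough; we only look at which intersector was attached.
    mesh = trimesh.creation.icosphere()
    # Assumption: the module of mesh.ray's class names the backend in use.
    return classify_ray_backend(type(mesh.ray).__module__)


if __name__ == "__main__":
    print("trimesh ray backend:", detect_ray_backend())
```

If this prints `fallback`, the data generation would probably still run, just very slowly, which matches the behavior described in this post.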

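Because the original Colab notebook does not verify the PyTorch version, a small guard at the top of the notebook can catch a mismatch before inference fails. This is a minimal sketch, assuming that comparing only the major and minor version against 1.4 is enough; `major_minor` and `torch_matches` are hypothetical helper names, not something from the PIFu code.

```python
import importlib.util

# The post states that the pre-trained model was trained with PyTorch 1.4.
EXPECTED = (1, 4)


def major_minor(version: str) -> tuple:
    """Parse a version such as '1.4.0+cu100' into (1, 4)."""
    core = version.split("+", 1)[0]  # drop a local build tag like '+cu100'
    major, minor = core.split(".")[:2]
    return int(major), int(minor)


def torch_matches(expected: tuple = EXPECTED):
    """Return True or False for the installed PyTorch, or None if it is missing."""
    if importlib.util.find_spec("torch") is None:
        return None
    import torch

    return major_minor(torch.__version__) == expected


if __name__ == "__main__":
    result = torch_matches()
    if result is None:
        print("PyTorch is not installed")
    elif result:
        print("PyTorch version matches 1.4")
    else:
        print("PyTorch version differs from 1.4; the pre-trained model may not load")
```

Running this as the first cell makes the version problem visible right away instead of surfacing later as an obscure error while loading the model.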